|

|

|

|

Previous: GENOPT Up: 5.4 Global Optimization Methods Next: SIMAN |

Simulated Annealing is a stochastic computational method for finding global

extremums to large optimization problems. It was first proposed as an

optimization technique by Kirkpatrick in 1983 [102] and Cerny in 1984

[103]. The optimization problem can be formulated as a pair of

![]() , where

, where ![]() describes a discrete set of configurations

(i.e. parameter values) and

describes a discrete set of configurations

(i.e. parameter values) and ![]() is the objective function that is to be

optimized. The problem is then to find a set

is the objective function that is to be

optimized. The problem is then to find a set

![]() such that

such that

![]() is optimal.

is optimal.

The optimization algorithm is based on a Physical Annealing analogy. Physical

Annealing is a process in which a solid is first heated until all particles are

randomly arranged in a liquid state, followed by a slow cooling process. At each

(cooling) temperature enough time is spent for the solid to reach thermal

equilibrium, where energy levels follow Boltzmann distribution. As temperature

decreases the probability tends to concentrate on low energy states. Care must

be taken to reach thermal equilibrium prior to decreasing the temperature. At

thermal equilibrium, the probability that a system is in a macroscopic

configuration ![]() with energy

with energy ![]() is given by the Boltzmann distribution

is given by the Boltzmann distribution

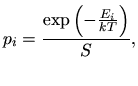

|

(5.9) |

|

(5.10) |

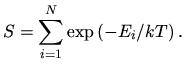

|

(5.11) |

|

(5.12) |

Optimization by Simulated Annealing involves the following preparatory steps:

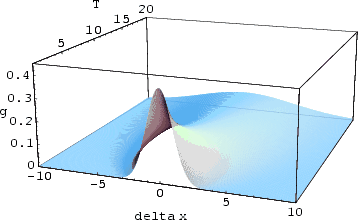

The optimization algorithm is therefore comprised of three basic functional

relationships: a probability density

![]() , where

, where ![]() is a

is a

![]() -dimensional vector, the acceptance function

-dimensional vector, the acceptance function

![]() , and the annealing

schedule function

, and the annealing

schedule function ![]() with the time step

with the time step ![]() . The optimization itself takes

place iteratively. Initially, the algorithm starts from a randomly chosen point

of which the cost is computed. Next a new point gets chosen from a random number

generator with a probability density

. The optimization itself takes

place iteratively. Initially, the algorithm starts from a randomly chosen point

of which the cost is computed. Next a new point gets chosen from a random number

generator with a probability density

![]() . In case the cost of this

point is better than the cost of the other one, the new point is taken over. In

case the cost is worse the point is accepted by a probability

. In case the cost of this

point is better than the cost of the other one, the new point is taken over. In

case the cost is worse the point is accepted by a probability

![]() . Another point is always chosen based on the best point so

far. With each iteration the probabilities for large deviations from the best

point and for acceptance decrease. This results in a behavior where distant

points are explored at the beginning (high temperature), but not generated or

rejected respectively as the temperature cools down.

. Another point is always chosen based on the best point so

far. With each iteration the probabilities for large deviations from the best

point and for acceptance decrease. This results in a behavior where distant

points are explored at the beginning (high temperature), but not generated or

rejected respectively as the temperature cools down.

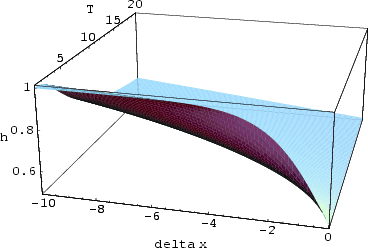

For the standard Boltzmann annealing

![]() ,

,

![]() and

and ![]() are

given by

are

given by

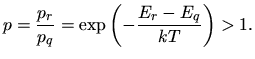

|

|

Several different annealing schemes than the one defined in the original algorithm have evolved in the past. A fixed schedule which is characterized by a fixed initial temperature, a constant temperature decrement, and a constant number of steps at each temperature is usually not practically applicable to general optimization problems since it requires several tests with different parameter values. Adaptive algorithms, which change their parameters during the evolution of the cost function are more appropriate. An adaptive algorithm was presented by Huang [106]. Here the parameters are chosen according to the mean value and standard deviation of the cost function. Also, in case the evaluation of the cost function itself is computationally expensive, a parallelized version of the Simulated Annealing algorithm is preferred over a sequential one.

2003-03-27